Tools like Midjourney and OpenAI’s ChatGPT are bringing the term “generative AI” into mainstream conversations. Artificial intelligence (AI) has existed for years but, as of late 2022, generative AI capabilities are now widespread and accessible to the general public.

It’s tricky to make hard-and-fast predictions about what comes next, but generative AI technologies certainly have the potential to transform the ways we work, how we find information, how we make art, and how we approach entire job markets.

That also has big implications for cybersecurity and how finance leaders should think about their role in mitigating cyber risks. But the best starting point is understanding the meaning of generative AI and the forms it can take.

What is generative AI?

Generative AI is a subset of the wider field known as “artificial intelligence.” AI systems are trained on datasets and designed to use logic for learning, analysing, and applying new information, similar to human cognitive functions.

Where generative AI is distinct is in its creation of new content, like text, images, or sound. Generative AI models are trained on large datasets – then, they use that knowledge to generate content that’s entirely new but builds off those original datasets.

Recent instances of generative AI have been able to produce more human-like output than previous versions. This more sophisticated output has some big security implications, but it’s also shaking up everything from academic testing to our understanding of art.

What are ChatGPT, DALL-E and Midjourney?

You might’ve already heard about these generative AI tools, which are some of the biggest and buzziest examples of this kind of AI. But what are they, exactly?

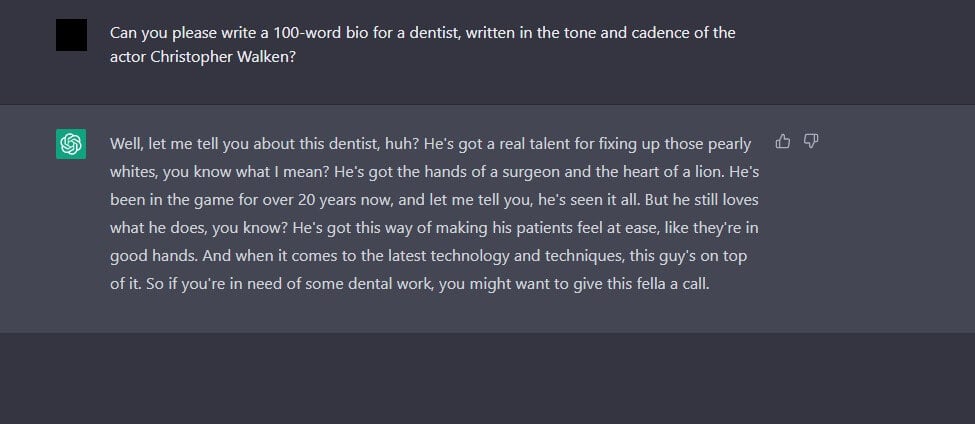

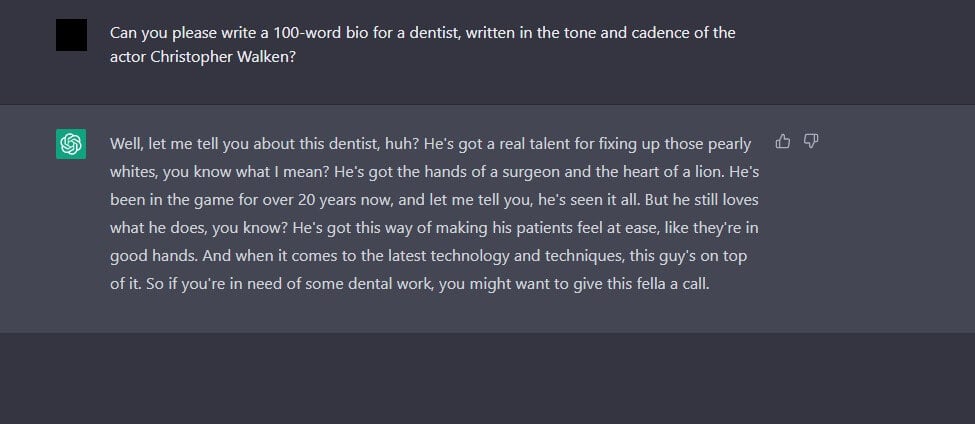

ChatGPT, one of the most popular generative AI tools, is a chatbot created by OpenAI. It interacts with users in a conversational way and is built on a model trained on large amounts of text, much of it from the internet. The chatbot is notable for its detailed, human-like responses and its ability to generate all kinds of content – including code.

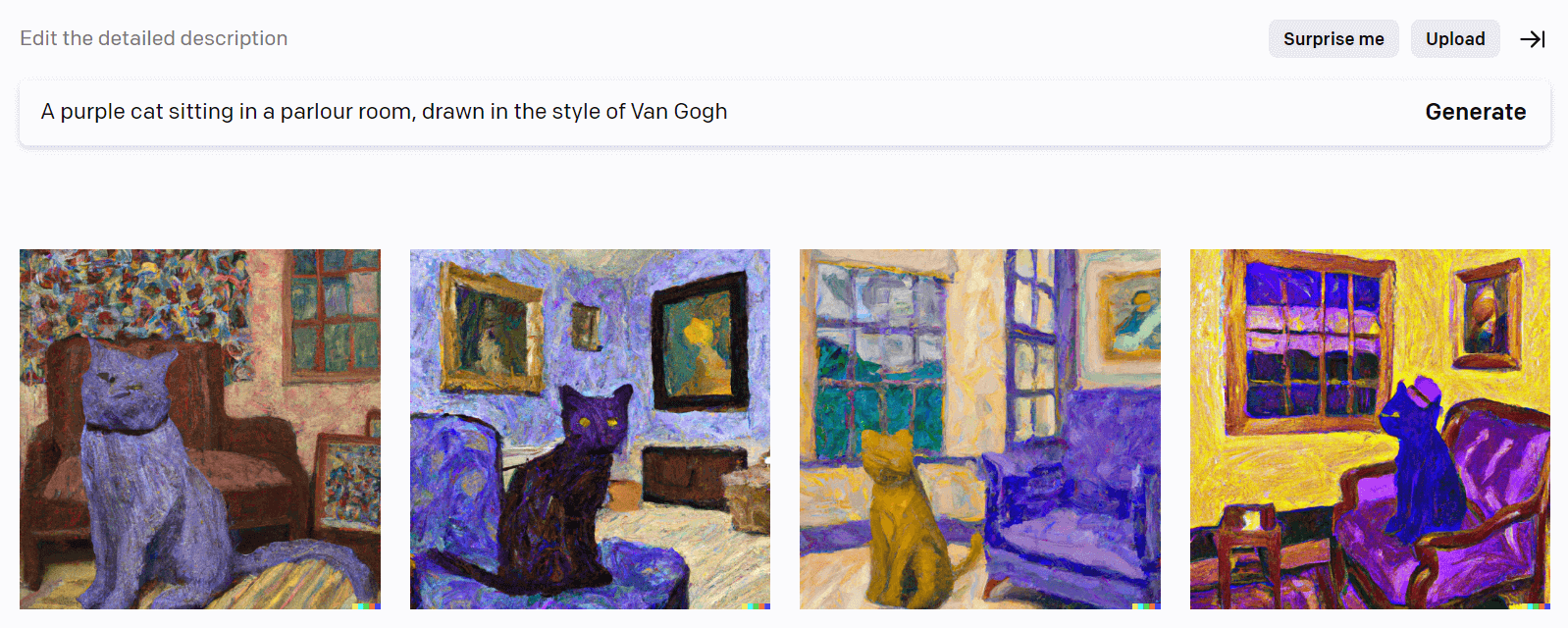

OpenAI is also responsible for the creation of DALL-E, another generative model. However, rather than text-based output, DALL-E generates images from text.

Midjourney is also an AI image generator. Both DALL-E and Midjourney demonstrate the power of generative AI – by training these models on massive datasets, they’re able to respond to plain-language commands and produce new content that’s often indistinguishable from human-created content. In fact, much of Eftsure’s imagery is AI-generated (like this blog’s header!).

With launch dates ranging from mid-2022 to late 2022, these generative AI models are in beta and largely accessible to the general public, continuously learning from the feedback and data fed into them.

How generative AI technologies work

By now, we’ve covered the basics of generative AI and some popular examples of it. So how does it work?

We’ll try to keep this simple. Generative AI uses neural networks, which are models loosely inspired by the way human brains process data. In one well-known design, two neural networks form a generative adversarial network (GAN), in which one network generates new data and the other network assesses the quality of that data.

These two networks build off one another, continuously refining the generator network’s output based on feedback from the other network.

This is how GANs can create output that’s sometimes indistinguishable from human-created content.
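
For readers who like to see the moving parts, here is a minimal sketch of that back-and-forth in Python. It is a toy, not how production tools are built: the "real" data is just numbers clustered around 4, each network is a single line of arithmetic, and every name in it (`train_toy_gan`, the parameters `a`, `b`, `w`, `c`) is made up for illustration.

```python
import math
import random


def sigmoid(t):
    # Logistic function, written to avoid overflow for large negative inputs.
    if t >= 0:
        return 1.0 / (1.0 + math.exp(-t))
    e = math.exp(t)
    return e / (1.0 + e)


def train_toy_gan(steps=2000, lr=0.01, seed=0):
    """Train a one-dimensional toy GAN and return its parameters.

    Assumptions for illustration only: real data is drawn from a bell
    curve centred on 4, the generator is fake = a * noise + b, and the
    discriminator is score = sigmoid(w * x + c).
    """
    rng = random.Random(seed)
    a, b = 1.0, 0.0  # generator parameters
    w, c = 0.0, 0.0  # discriminator parameters

    for _ in range(steps):
        real = rng.gauss(4.0, 0.5)   # one sample of "real" data
        noise = rng.gauss(0.0, 1.0)  # random input for the generator
        fake = a * noise + b         # the generator's attempt

        # Discriminator step: raise the score for real data and lower it
        # for fake data (gradient of -log D(real) - log(1 - D(fake))).
        s_real = sigmoid(w * real + c)
        s_fake = sigmoid(w * fake + c)
        w -= lr * (-(1.0 - s_real) * real + s_fake * fake)
        c -= lr * (-(1.0 - s_real) + s_fake)

        # Generator step: use the discriminator's feedback to make the
        # fake sample score higher (gradient of -log D(fake)).
        s_fake = sigmoid(w * fake + c)
        grad_fake = -(1.0 - s_fake) * w
        a -= lr * grad_fake * noise
        b -= lr * grad_fake

    return (a, b), (w, c)


if __name__ == "__main__":
    generator, discriminator = train_toy_gan()
    print("generator (a, b):", generator)
    print("discriminator (w, c):", discriminator)
```

In a toy like this, the generator's offset `b` tends to drift toward the centre of the real data as training goes on, although small GANs often wobble rather than settle neatly. Real image generators follow the same loop with far larger networks and datasets.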

Types of generative AI

In the popular examples above, we’ve already seen how different applications can produce different types of output. Now let’s dive a little deeper into the different types of generative AI and the content they can create.

Text generation

From screenplays to two-way dialogue that provides emotional support, ChatGPT’s text-based responses are uniquely human-like. Its capabilities are broad – it can distil information drawn from across the internet and perform tasks like coding or creative writing.

ChatGPT isn’t the only AI-enabled text generator. In fact, other models have been around for a while. But its capabilities have spawned other text generators, with developers using OpenAI’s API to build programs like language “re-phrasers.”

Image generation

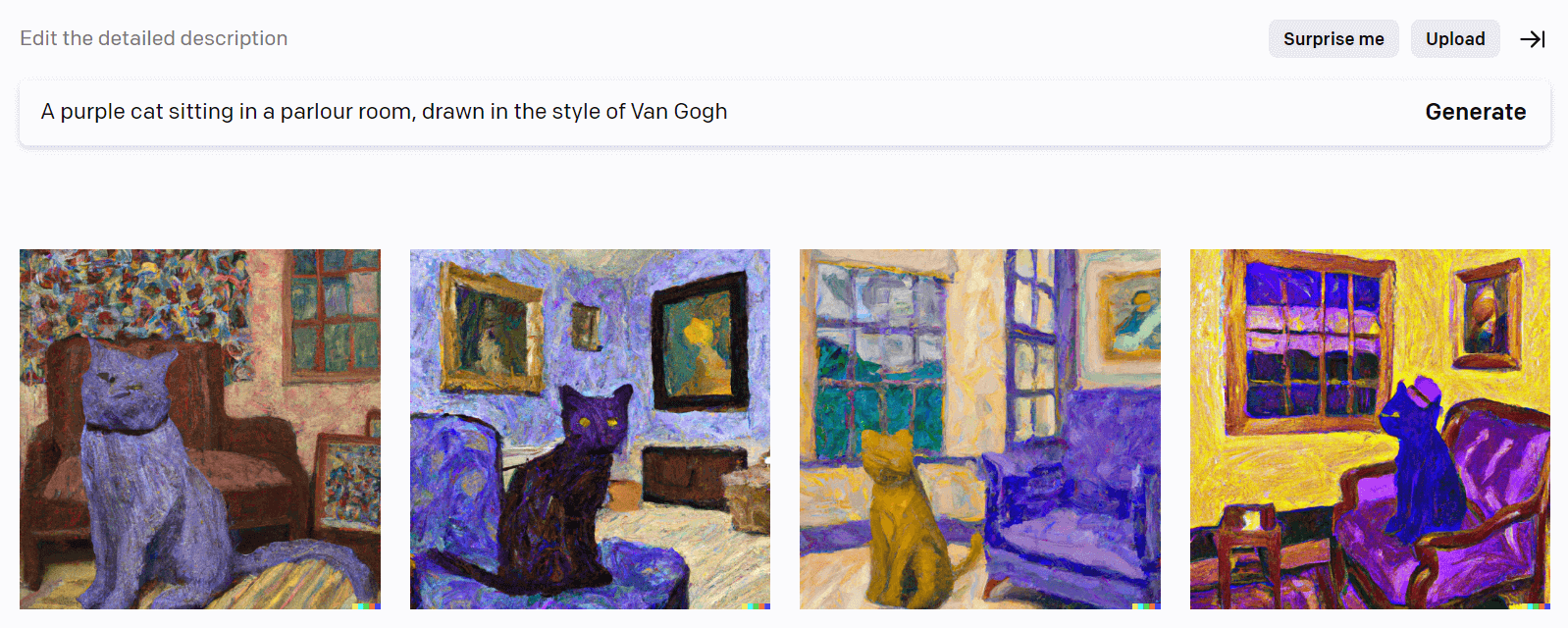

Image generators like Midjourney and DALL-E are trained on existing images and artwork, sometimes controversially. But they’re hardly the only image generators. This form of generative AI uses photography, images, and artwork as datasets to create altogether new imagery based on textual prompts.

Increasingly, these generators can produce any style you want, including 2D, 3D, photorealistic, and various art styles like impressionist or modern.

Style transformations

Remember when we discussed how generative AI models work, with two neural networks forming a GAN? Well, StyleGAN (Style Generative Adversarial Network) builds on this design by proposing larger changes to the generator model.

The end result is a model that can produce highly photorealistic images of faces and manipulate those images. One application of StyleGAN is the creation of deepfakes. These increasingly convincing images and videos can create the illusion of almost anyone doing or saying anything.

Text-to-speech

Broadly speaking, text-to-speech tools use machine learning algorithms to convert written text into spoken words. This involves several steps, including text normalisation, linguistic analysis, and acoustic modelling. One key component of the process is the generation of speech waveforms, which is where generative AI comes into play.

Generative models are trained on large datasets of human speech recordings and learn to generate waveforms that sound like human speech based on the input text. One example of this is WaveNet, a generative model for raw audio, created by British AI research lab DeepMind. Another is Amazon Polly, a service that converts text to speech.

What are the limitations of AI models?

Clearly, there have been massive strides in generative AI – and we’re sure to see more. But there are a few limitations to remember when working with generative models.

Data dependency: Like almost any other system or model, the output is only as good as the data you input. As datasets become larger, generative AI outputs tend to improve in quality. But it’s not always easy to access or input such large amounts of data, and it also becomes harder to control what sort of data is going into the model.

Unpredictability: Especially when producing complex media like images or music, generative models sometimes find and reproduce patterns in ways that are difficult to understand or control. For example, image generators like DALL-E are impressive, but you’re bound to find yourself asking questions like, “Why is this dog’s nose randomly melting into an ice cream cone?” every now and then.

Computationally intensive: Generative models often need more computing power and memory than other types of machine learning models. As a result, they can be slower and more resource-intensive to train and use.

A new age of fraud

Over the past decade, technology has already been radically transforming the threat landscape. The growing proliferation of tech-enabled tools means that the barriers to becoming a cybercriminal continue to get lower, while shifts toward hybrid working and dispersed teams mean there are simply larger attack surfaces for criminals to target.

This is where the topic of AI technology and the interests of finance leaders dovetail. Many financially motivated cybercrimes rely on deceiving AP staff and circumventing traditional financial controls. As generative AI technologies improve, that deception will continue to get easier and easier.

Generative AI can help cybercriminals run increasingly sophisticated social engineering and business email compromise (BEC) scams: impersonating trusted contacts, luring employees into giving away sensitive information, and creating convincing phishing emails at scale.

This is something leaders should be factoring into strategies, processes and procedures. Cybercriminals are always hunting for new ways to leverage technology – but finance leaders can do the same. By aligning your cybersecurity strategy with updated, technology-enabled financial controls, you can drive a comprehensive approach that protects your organisation from the many direct and indirect costs of cybercrime.